Pragmatic Testing in Convoluted Times

A pragmatic testing approach for this new, rising, and yet convoluted software development paradigm.


Exploring AI-driven workflows with an MCP-powered blog. The starting point of an AI partnership to build my own blog.

Testing strategy is one of those topics that never really settles. The same discussions resurface again and again—during code reviews, architecture sessions, debates about coverage. I’ve had these conversations countless times, often finding myself explaining the same mental model, the same trade-offs, the same reasoning.

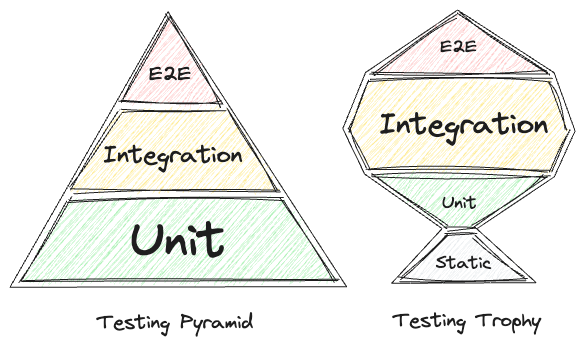

The testing Pyramid is probably the first image that comes to mind for most software engineers when talking about testing. But that picture has been questioned many times. To mention a few: Kent C. Dodds famously proposed the Testing Trophy, with the advice “Write tests. Not too many. Mostly integration.” Saša Jurić wrote about testing at the interface level in Elixir applications. Even Martin Fowler, in the second note of his Test Pyramid article, observes: “If my high-level tests are fast, reliable, and cheap to modify, then lower-level tests aren’t needed.”

This image shared by Tomasz Ducin on X is a great side-by-side comparison of the two best-known models on the testing spectrum:

Over time, I realized I was missing something simple: a place to point to. A document that captured how I think about testing—not as a universal truth, but as a working model shaped by real systems and real constraints. This post is that reference. For others, maybe. For myself, definitely.

What I’m writing below might be controversial. It challenges the well-known testing Pyramid, goes a step beyond the Trophy, and grows out of the many back-and-forth discussions we’ve all had: how much to unit test, where integration tests fit, what coverage really tells us, and so on.

The Trophy resonates with me as a mental model, and I take it one step further by leaning into pragmatism.

Before going further, it’s only fair to mention that this approach didn’t really click for me until the last five years of my development experience. That’s when I had the pleasure of meeting Elixir and discovering the architecture and tooling that made this model clearly feasible in real production systems.

What I’m proposing is a simplification for the average application. Take the Trophy, remove the debates about test distribution, and you’re left with a single primary testing layer for business logic: the boundary. This removes paralysis from the equation. Test there. Fill gaps when necessary. Move forward.

The approach is simple: tests should live at the outermost acceptable edge of the system. A single level of integration. The test begins where input enters and asserts on the final state. The full journey.

A couple of examples:

For an API, the test starts with an HTTP request and asserts on both the response and the database state.

Given a user in this state, when they hit this endpoint with this payload, then these records exist and this response is returned.
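To make the API case concrete, here is a minimal sketch in Python, used only as a neutral illustration language. It assumes a hypothetical `POST /orders` endpoint, an in-memory SQLite database, and a WSGI app that the test drives through its real entry point rather than through internal functions. All names (`new_db`, `make_app`, `request`, the `users` and `orders` tables) are invented for the example.

```python
import io
import json
import sqlite3
from wsgiref.util import setup_testing_defaults


def new_db():
    """In-memory database with the (hypothetical) schema the app expects."""
    db = sqlite3.connect(":memory:")
    db.executescript(
        "CREATE TABLE users (id INTEGER PRIMARY KEY);"
        "CREATE TABLE orders (id INTEGER PRIMARY KEY, user_id INTEGER, total_cents INTEGER);"
    )
    return db


def make_app(db):
    """Hypothetical WSGI app exposing a single endpoint: POST /orders."""
    def app(environ, start_response):
        headers = [("Content-Type", "application/json")]
        if (environ["REQUEST_METHOD"], environ["PATH_INFO"]) != ("POST", "/orders"):
            start_response("404 Not Found", headers)
            return [b'{"error": "not found"}']
        length = int(environ.get("CONTENT_LENGTH") or 0)
        payload = json.loads(environ["wsgi.input"].read(length))
        user = db.execute("SELECT id FROM users WHERE id = ?", (payload["user_id"],)).fetchone()
        if user is None:
            start_response("422 Unprocessable Entity", headers)
            return [b'{"error": "unknown user"}']
        cur = db.execute(
            "INSERT INTO orders (user_id, total_cents) VALUES (?, ?)",
            (payload["user_id"], payload["total_cents"]),
        )
        db.commit()
        start_response("201 Created", headers)
        return [json.dumps({"order_id": cur.lastrowid}).encode()]
    return app


def request(app, method, path, payload):
    """Call the app through its WSGI entry point, as a real server would."""
    body = json.dumps(payload).encode()
    environ = {}
    setup_testing_defaults(environ)
    environ.update({
        "REQUEST_METHOD": method,
        "PATH_INFO": path,
        "CONTENT_TYPE": "application/json",
        "CONTENT_LENGTH": str(len(body)),
        "wsgi.input": io.BytesIO(body),
    })
    captured = {}

    def start_response(status, headers):
        captured["status"] = status
        return lambda data: None  # WSGI's legacy write() callable, unused here

    chunks = app(environ, start_response)
    return captured["status"], json.loads(b"".join(chunks))


def test_user_creates_order():
    db = new_db()
    # Given a user in this state...
    db.execute("INSERT INTO users (id) VALUES (1)")
    # ...when they hit this endpoint with this payload...
    status, body = request(make_app(db), "POST", "/orders", {"user_id": 1, "total_cents": 1500})
    # ...then this response is returned and these records exist.
    assert status == "201 Created"
    assert body == {"order_id": 1}
    assert db.execute("SELECT user_id, total_cents FROM orders").fetchall() == [(1, 1500)]
```

The test never calls the insert logic directly. If the handler were later split into services or modules, the test would stay unchanged as long as the status, the body, and the rows still hold.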

For a stream processor, the same idea applies.

Given this database state, when this event arrives, then these records are created or updated and these side effects occur.
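The stream-processor case has the same shape. In this Python sketch, the hypothetical `handle_event` function stands in for whatever callback a consumer library invokes per message, and the outbound publisher is passed in so the test can record side effects instead of emitting them. The table, the event shape, and the topic name are all assumptions for illustration.

```python
import sqlite3


def handle_event(db, publish, event):
    """Hypothetical consumer entry point: called once per event taken off the stream."""
    if event["type"] == "payment_received":
        db.execute("UPDATE invoices SET status = 'paid' WHERE id = ?", (event["invoice_id"],))
        db.commit()
        publish("invoice.paid", {"invoice_id": event["invoice_id"]})
    # Other event types are ignored in this sketch.


def test_payment_marks_invoice_as_paid():
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE invoices (id INTEGER PRIMARY KEY, status TEXT)")
    # Given this database state...
    db.execute("INSERT INTO invoices (id, status) VALUES (7, 'open')")
    published = []  # records outbound messages instead of sending them

    # ...when this event arrives...
    handle_event(
        db,
        lambda topic, message: published.append((topic, message)),
        {"type": "payment_received", "invoice_id": 7},
    )

    # ...then these records are updated and these side effects occur.
    assert db.execute("SELECT status FROM invoices WHERE id = 7").fetchone() == ("paid",)
    assert published == [("invoice.paid", {"invoice_id": 7})]
```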

The entry point doesn’t matter. Web request, background job, event stream, CLI command—find the boundary and test there.

Mocking still has a place. It’s useful for simulating failure scenarios that are otherwise hard to reproduce: timeouts, unexpected errors from external services, partial outages. But it should be the exception, not the default.

The boundary can be intentionally squeezed. This is where pragmatism comes in, and where unit tests can still have their place. Squeezing helps keep the setup manageable or avoid unacceptable overhead. In those cases, unit tests can fill the gap between the real entry point and the start of the boundary.
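As a hypothetical illustration, suppose the outermost edge includes an API-key check that is costly to set up in every test (for instance, because real keys would come from an external vault). Squeezing the boundary one step in means the business-logic tests call the inner app directly, while a small unit test covers the gap the squeeze leaves: the wrapper itself. The `require_api_key` wrapper and the `X-Api-Key` header are assumptions, sketched in Python with plain WSGI callables.

```python
def require_api_key(inner, valid_keys):
    """Hypothetical outer layer: rejects requests without a known API key."""
    def app(environ, start_response):
        if environ.get("HTTP_X_API_KEY") not in valid_keys:
            start_response("401 Unauthorized", [("Content-Type", "text/plain")])
            return [b"unauthorized"]
        return inner(environ, start_response)
    return app


def test_wrapper_rejects_unknown_key():
    calls = []

    def inner(environ, start_response):
        # Stand-in for the real app; records whether it was reached.
        calls.append(environ)
        start_response("200 OK", [])
        return [b"ok"]

    statuses = []
    app = require_api_key(inner, {"secret"})
    body = app({"HTTP_X_API_KEY": "wrong"}, lambda status, headers: statuses.append(status))

    assert statuses == ["401 Unauthorized"]
    assert body == [b"unauthorized"]
    assert calls == []  # the inner app was never reached
```

Business-logic tests would then target the inner app, starting from a request that is already authorized.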

This approach is not laziness. It’s a conscious decision about where to draw the line.

Testing at the boundary means testing what actually matters: the behavior that users and other systems depend on. Everything else—the internal module structure, helper functions, data flow—is an implementation detail. Important, yes. But not the contract.

Seamless refactoring.

This is the biggest win. When tests only care about boundaries, you can freely restructure internals. Rename modules, extract services, change data flow. As long as the contract holds, tests stay green.

Clear business rules.

Each test reads like a specification: given this state, when this happens, then this is the result. Test files become living documentation of what the system does—not how it happens to be wired today.

A holistic view of the system.

You exercise the real path—the same one production will take. No more situations where all unit tests pass but production breaks because the integration between A and B was never tested.

More meaningful coverage.

When a code path is covered, it’s because a real scenario exercises it—not because a helper function was called in isolation.

A simpler testing approach.

One layer means one setup style, one set of helpers, one mental model. Less ceremony, less maintenance.

“Integration tests are slow.”

Historically true. Much less so today. Tooling has improved dramatically, and in some ecosystems—Elixir being a standout example—this objection largely disappears.

That said, there’s still overhead. Spinning up a database, seeding state, exercising the full path—it’s not free. The outcome depends heavily on the stack and the application. If this approach leads to unacceptably long test times, try squeezing the boundary one step in. If it’s still unacceptable, this model might not apply to your case. Still, for the average application, the overhead is usually worth the increased confidence and reduced maintenance.

“You’ll get a combinatorial explosion of scenarios.”

In practice, most business logic has a limited set of meaningful boundary-level scenarios. When it doesn’t, consider whether the boundary itself can be made lighter before falling back to unit tests. If you still end up with an unmanageable number of cases, extract that logic into a pure module and unit test it. Unit testing here is a fallback, not the default.
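A sketch of that fallback, under invented rules: a hypothetical shipping-fee calculation with several interacting inputs is pulled out into a pure function with no database or I/O, so a table of cases can cover the combinations cheaply. One or two boundary tests would still confirm that the endpoint actually uses the function; the fine-grained combinations live here. The function name, country codes, and amounts are assumptions.

```python
def shipping_fee_cents(country: str, weight_grams: int, is_member: bool) -> int:
    """Hypothetical pricing rule. Pure: no database, no I/O, same input gives same output."""
    fee = 500 if country == "PT" else 1500  # assumed domestic vs. international base fee
    if weight_grams > 2000:
        # 300 cents per started kilogram above 2 kg
        fee += 300 * ((weight_grams - 2000 + 999) // 1000)
    if is_member and country == "PT":
        fee = 0  # assumed: members ship domestically for free
    return fee


# Each row: (country, weight_grams, is_member, expected_fee_cents)
CASES = [
    ("PT", 500, False, 500),
    ("PT", 500, True, 0),
    ("PT", 2001, False, 800),
    ("PT", 4500, True, 0),
    ("DE", 500, False, 1500),
    ("DE", 500, True, 1500),
    ("DE", 4500, False, 2400),
]


def test_shipping_fee_table():
    for country, weight, member, expected in CASES:
        assert shipping_fee_cents(country, weight, member) == expected, (country, weight, member)
```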

“You won’t know where the bug is.”

True. But it’s better to know that something important is broken than to have a sea of green tests hiding broken behavior. Today, AI-assisted debugging also makes root-cause analysis far cheaper.

“Unexpected dependency issues are hard to test.”

True, but there’s solid tooling for this. When external dependencies behave unpredictably (timeouts, API failures, partial outages), you can use mocks to force the failure scenario and rely on telemetry or message passing to assert the outcome.
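Here is one way that can look, sketched in Python with the standard library’s `unittest.mock`. The external call is injected and replaced by a `Mock` whose `side_effect` raises `TimeoutError`, which forces the failure context; the outcome is then asserted through emitted telemetry-style events rather than internal state. The `sync_customer` job and the event names are hypothetical.

```python
from unittest import mock


def sync_customer(fetch_profile, emit, customer_id):
    """Hypothetical job: fetch a profile from an external API and report the outcome."""
    try:
        profile = fetch_profile(customer_id)
    except TimeoutError:
        emit("customer_sync.failed", {"customer_id": customer_id, "reason": "timeout"})
        return None
    emit("customer_sync.succeeded", {"customer_id": customer_id})
    return profile


def test_upstream_timeout_is_reported():
    # The mock forces the hard-to-reproduce context: the external API times out.
    fetch_profile = mock.Mock(side_effect=TimeoutError("upstream took too long"))
    events = []

    result = sync_customer(fetch_profile, lambda name, meta: events.append((name, meta)), 42)

    # The outcome is asserted through what the job reported, not through its internals.
    assert result is None
    fetch_profile.assert_called_once_with(42)
    assert events == [("customer_sync.failed", {"customer_id": 42, "reason": "timeout"})]
```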

“These tests become complex and hard to read.”

Readability is an implementation detail. Extract helpers, build factories, create domain-specific test utilities. Setup complexity doesn’t invalidate the approach—it just means your test code needs better abstractions.
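As an illustration of such a helper, here is a hypothetical test factory in Python: it fills in sensible defaults so each test states only the attributes its scenario cares about. The table layout, the `new_test_db` and `insert_user` names, and the default values are all assumptions.

```python
import itertools
import sqlite3

_next_id = itertools.count(1)


def new_test_db():
    """In-memory database with a hypothetical users table."""
    db = sqlite3.connect(":memory:")
    db.execute(
        "CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT, plan TEXT, active INTEGER)"
    )
    return db


def insert_user(db, **overrides):
    """Factory: defaults for everything, overrides for what the scenario is about."""
    user_id = overrides.get("id", next(_next_id))
    user = {
        "id": user_id,
        "email": f"user{user_id}@example.com",
        "plan": "free",
        "active": 1,
    }
    user.update(overrides)
    db.execute(
        "INSERT INTO users (id, email, plan, active) VALUES (:id, :email, :plan, :active)",
        user,
    )
    return user


def test_pro_user_is_stored():
    db = new_test_db()
    # The scenario only cares about the plan, so that is all the test states.
    user = insert_user(db, plan="pro")
    row = db.execute("SELECT plan, active FROM users WHERE id = ?", (user["id"],)).fetchone()
    assert row == ("pro", 1)
```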

“It’s hard to get 100% coverage of internal components.”

True. But if a code path isn’t exercised through the boundary, it’s not being used in practice. That’s a signal—either the code is dead or a real scenario is missing. If the path is genuinely needed but awkward to test, zoom out and improve your test abstractions. Test effort should follow real usage, not coverage numbers.

Application code and libraries are different beasts.

For libraries—especially public ones—the testing Pyramid still makes perfect sense. Stable APIs, exhaustive edge-case coverage, clear contracts. Unit tests are the specification.

The same applies to internal shared tooling with multiple consumers.

My rule of thumb:

There’s a growing concern that AI-assisted development might compromise quality assurance. That concern is precisely why the rise of AI makes testing more important than ever, and this approach to testing is particularly well suited for it.

When AI writes code, boundary tests become your specification. They define the contract that must hold, regardless of how the implementation evolves. The test says: given this input, the system must produce this output. How it gets there is up to the AI.

When a bug appears in the application, this model fits naturally: you add a new boundary-level integration test that captures the broken behavior. Once the test fails, the fix is as simple as letting AI iterate on the implementation until the test passes. The test becomes the anchor that guides the change.

This shifts developer focus from reviewing implementation details to defining correct behavior. Instead of scrutinizing every line of generated code, you verify that the system behaves as intended. The tests become the source of truth.

Does this mean architecture doesn’t matter? Not at all. Good architecture is still essential for humans to understand and maintain the system. We shouldn’t become blind to internal structure. But that’s a topic for another post.

What matters here is this: when AI can regenerate implementations quickly, tests become your quality guardrails. They let you move fast without breaking things. They give you permission to let AI iterate freely on internals while maintaining confidence that the contract holds.

In a world where code is produced 10× faster, boundary tests are the thing you trust.

This post is a foundational statement of my own thinking about pragmatic testing. I truly believe it can help navigate the emerging yet convoluted paradigm in software development, where acceleration is everywhere.

I also believe Elixir and pragmatic testing together strike a well-balanced trade-off between delivery speed, quality assurance, and developer happiness.

Next, I’m looking forward to covering the how: concrete Elixir examples for APIs, stream processors, MCP servers, and more.

This post was created as part of an experiment in AI-augmented content creation. The thoughts are mine, the words are collaborative, and the publishing is seamless.

Have comments, feedback, or questions? Feel free to contact me using the MCP integration and I'll be happy to answer back.